The new SEO question is not just, “Can I rank?” It is, “Will an AI system trust my site enough to use it as a source?”

That is the real shift.

A lot of marketers are still treating AI search optimization like normal SEO with a few new buzzwords. That is a mistake. Google’s own guidance says that to perform well in its AI search experiences, a site should focus on useful, people-first content and make its pages easy to access.

In simple words, ranking is no longer the full game.

Now your website also needs to be:

- clear enough to understand

- trustworthy enough to use

- structured enough to extract from

- consistent enough to rely on

If your site is vague, bloated, thin, or messy, you are not only hurting SEO. You are making it harder for AI systems to treat your site as a reliable source.

That is why this topic matters in 2026.

Why “source of truth” matters in AI search now

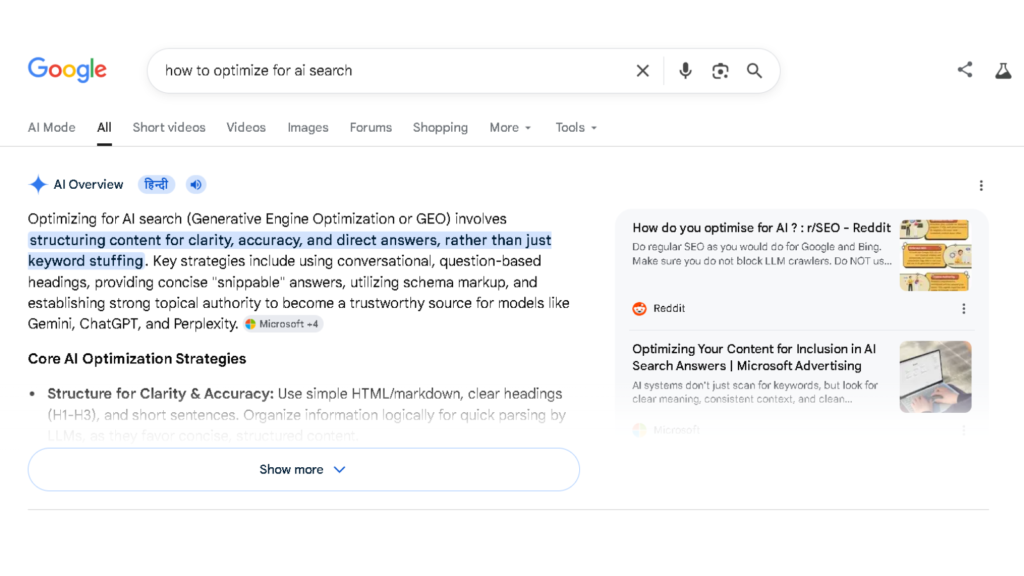

Search is changing from simple ranking to answer construction.

Google’s AI search experiences are built to help users get summarized, useful answers faster. Other AI systems are also increasingly using live web data and citations. That means your website is not just a page trying to get clicks. It is becoming part of the information layer that AI systems use to build answers.

This changes the optimization mindset.

Old mindset:

- rank for keyword

- get traffic

- publish more pages

New mindset:

- become a trusted source

- make information easy to extract

- make your site easier to understand

- give AI systems fewer reasons to ignore you

That is what “source of truth” means here.

It means your website becomes the place that is strong enough to be trusted, cited, and used.

Read more: AI Overviews for marketers

Ranking is not enough anymore

A page can rank and still be a weak source.

That is the part many people are missing.

AI systems are not just looking for a URL to display. They are trying to build an answer. That means they need pages that are clear, consistent, well-structured, and useful enough to support an explanation.

| Classic SEO mindset | AI-search-ready mindset |
|---|---|
| Rank for a keyword | Become reliable enough to cite |
| Publish more content | Publish clearer, stronger source content |
| Focus on blue-link traffic | Focus on trust, extraction, and answer usefulness |
| Optimize title and meta only | Optimize structure, meaning, proof, and consistency |
| Chase position | Build topic ownership |

Ranking gets you seen. Source clarity gets you cited.

That is the difference.

What AI systems need from your website

If you want your website to become a stronger source for AI search, stop thinking only about content production.

Start thinking about source quality.

Clear authorship

Your content should make it obvious who is behind it, what expertise they have, and why the reader should trust the page.

That does not mean fake authority signals. It means real clarity:

- clear author identity

- strong about page

- clear topical focus

- consistent brand positioning

Entity clarity

Your website should make it easy to understand:

- who you are

- what your website is about

- which topics you actually cover

- what you want to be known for

If your site talks about too many disconnected things, your source strength weakens.

Factual consistency

One of the biggest hidden problems in AI search is inconsistency.

If one page says one thing, another page says something slightly different, and your site is full of half-updated ideas, that weakens trust.

AI systems are already imperfect. That makes clean source material more valuable, not less.

Topical completeness

Thin pages are weak sources.

You do not need to write bloated 4,000-word articles, but your page should cover the topic properly:

- what it is

- why it matters

- how it works

- what to avoid

- what the practical takeaway is

That kind of completeness makes a page more useful for both users and AI systems.

Structured formatting

Bad formatting kills clarity.

Strong source pages usually have:

- a clean intro

- clear H2s

- useful H3s

- direct answer lines

- comparison tables where needed

- FAQ sections where relevant

Good structure improves readability and extractability.
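
One way to sanity-check heading structure is to pull the outline out of a page and look for skipped levels. The sketch below is a minimal illustration using only Python's standard library. The class name `HeadingOutline`, the helper `skipped_levels`, and the sample HTML are all hypothetical, and the "no skipped levels" rule is a common convention assumed here, not a stated ranking requirement.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for h1-h6 elements in document order."""

    def __init__(self):
        super().__init__()
        self.outline = []    # finished headings, e.g. [(2, "Why it matters")]
        self._level = None   # level of the heading currently open, if any
        self._parts = []     # text fragments inside the open heading

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._parts = []

    def handle_data(self, data):
        if self._level is not None:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.outline.append((self._level, "".join(self._parts).strip()))
            self._level = None


def skipped_levels(outline):
    """Return headings that sit more than one level deeper than the previous heading.

    The first heading is compared against level 0, so a page that opens
    with an h2 or deeper is flagged as well.
    """
    problems = []
    previous = 0
    for level, text in outline:
        if level > previous + 1:
            problems.append((level, text))
        previous = level
    return problems


# Hypothetical page fragment, for illustration only.
sample_html = """
<h1>AI search guide</h1>
<h2>What it is</h2>
<h4>Edge cases</h4>
<h2>Why it matters</h2>
"""

parser = HeadingOutline()
parser.feed(sample_html)
print(parser.outline)
print(skipped_levels(parser.outline))
```

In this sample the `h4` is flagged because it follows an `h2` directly. A flagged heading is a prompt to review the page, not proof that something is wrong.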

Crawl accessibility

If search engines and AI-related crawlers cannot properly access your content, your page becomes weaker by default.

That means you should avoid:

- blocked important pages

- weak internal linking

- heavy clutter

- poor page experience

- key information hidden in non-accessible formats
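
A quick way to check for accidentally blocked pages is to test your robots.txt rules against the crawlers you care about. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content, the URLs, and the helper name `access_report` are hypothetical, and the user-agent tokens are examples only, so confirm the exact tokens in each vendor's crawler documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice you would load your own file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def access_report(robots_txt, user_agents, urls):
    """Map each (user_agent, url) pair to True/False according to the robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        (agent, url): parser.can_fetch(agent, url)
        for agent in user_agents
        for url in urls
    }


report = access_report(
    ROBOTS_TXT,
    user_agents=["Googlebot", "GPTBot"],  # example tokens; verify against vendor docs
    urls=["https://example.com/guide", "https://example.com/private/notes"],
)
for (agent, url), allowed in report.items():
    print(f"{agent:10} {'allowed' if allowed else 'blocked':8} {url}")
```

This only covers robots.txt rules. It says nothing about pages blocked by login walls, `noindex` directives, or content that only appears after client-side rendering.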

Original proof

This is where most generic AI content fails.

If your article says the same things everyone else is saying, why should it become the stronger source?

Original proof can be:

- a framework

- a comparison

- a real example

- a screenshot

- a practical interpretation

- a first-hand observation

You do not need fake thought leadership.

You need something that proves the article came from real thinking.

Read more: Agentic AI in marketing

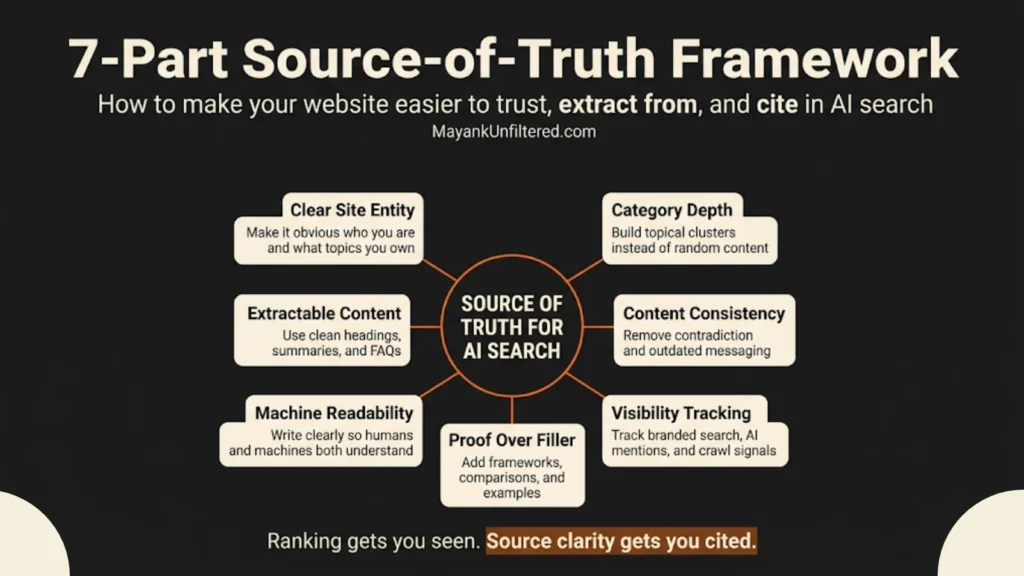

The 7-part source-of-truth framework

If you want to improve your website for AI search, this is the practical framework to follow.

1. Define your site entity clearly

Your site should clearly communicate:

- who you are

- what you do

- what topics you own

- what kind of reader you serve

Confused websites become weak sources.

2. Build category depth, not random volume

Publishing lots of disconnected content can make a site look busy, but not trustworthy.

Strong websites build depth inside focused categories.

3. Make each article easier to extract from

Every important article should have:

- a sharp intro

- clean section hierarchy

- quotable lines

- practical explanations

- FAQs where relevant

- a clear conclusion

4. Remove contradiction across the site

Audit old pages for:

- outdated claims

- overlapping topics

- unclear positioning

- inconsistent language

- stale information

A cleaner site creates stronger source trust.

5. Add proof where others add filler

Do not increase word count just to look comprehensive.

Instead add:

- one useful table

- one clean framework

- one screenshot where needed

- one real interpretation

That improves source value far more than fluff.

6. Improve machine readability without sounding robotic

Do not write for robots.

Write for humans in a way that machines can clearly understand.

That means:

- simple sentence flow

- low ambiguity

- explicit claims

- logical structure

- visible relationships between ideas

7. Track visibility beyond rankings

One of the biggest current problems is that there is no real Search Console-style reporting layer for most AI platforms. That is why broader visibility tracking matters more now.

So watch:

- branded search lift

- topic ownership

- manual AI query testing

- unusual referral patterns

- crawl behavior where possible

How to measure AI search visibility without proper reporting

This is where things get frustrating.

Most marketers still do not have clean reporting for AI visibility. There is no complete equivalent of Google Search Console for ChatGPT, Claude, or Perplexity visibility.

That means you need to work with directional signals.

Track branded search lift

If AI search starts exposing more people to your brand, branded searches can increase before attribution becomes clear.
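
Branded lift is simply the percentage change in branded demand between two comparable periods. The sketch below shows the arithmetic. The function name `percent_lift` and the click counts are hypothetical, and it assumes you already export branded-query clicks from a tool such as Search Console.

```python
def percent_lift(baseline, current):
    """Percentage change from baseline to current; None if the baseline is zero."""
    if baseline == 0:
        return None
    return (current - baseline) * 100 / baseline


# Hypothetical monthly branded-query clicks, for illustration only.
baseline_clicks = 400
current_clicks = 460
print(f"Branded lift: {percent_lift(baseline_clicks, current_clicks):.1f}%")
```

Compare like with like, for example the same month year over year, so seasonality is not mistaken for lift.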

Test real AI-style queries manually

Search the way actual users ask:

- best resources on AI search optimization

- how to optimize content for AI search

- best blogs on AI Overviews

- source of truth website SEO

Then see whether your site gets mentioned, cited, or reflected.

Watch referral and behavior patterns

Sometimes the clues are indirect:

- deeper page visits

- higher branded demand

- stronger engagement on strategic pages

- unusual traffic behavior

Use crawl and log thinking where possible

If AI-related crawlers are accessing your site, that gives useful direction even if it does not show the full picture.
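
As a rough sketch of what that log thinking can look like, the snippet below counts hits per crawler and path in combined-format access log lines. The crawler tokens are examples to verify against each vendor's documentation, and the log lines, the function name `count_ai_crawler_hits`, and the regex are illustrative assumptions, not a complete log parser.

```python
import re
from collections import Counter

# Example crawler tokens; treat this list as an assumption and verify it
# against each vendor's published crawler documentation.
AI_CRAWLER_TOKENS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Captures the request path and the final quoted field (the user agent)
# of a combined-format access log line.
LOG_LINE = re.compile(r'"(?:GET|HEAD) (?P<path>\S+) [^"]*".*"(?P<agent>[^"]*)"\s*$')


def count_ai_crawler_hits(lines, tokens=AI_CRAWLER_TOKENS):
    """Count hits per (crawler token, path) where the user agent contains a token."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        for token in tokens:
            if token.lower() in agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


# Hypothetical log lines, for illustration only.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /ai-search-guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2026:10:02:11 +0000] "GET /ai-search-guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:05:42 +0000] "GET /about HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.44 - - [10/Jan/2026:10:06:03 +0000] "GET /about HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for (token, path), count in count_ai_crawler_hits(sample_log).most_common():
    print(f"{token:14} {path:20} {count}")
```

User-agent strings can be spoofed, so treat these counts as a directional signal rather than verified crawler activity.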

Track topic ownership, not just single rankings

The real question is not only whether one blog moved from position 9 to 6.

The real question is whether your site is becoming more associated with a topic over time.

Mistakes that make your site weak in AI search

Most websites will not fail because they ignored AI search completely.

They will fail because their website quality was already weak.

Publishing generic AI content

If the article could belong to anyone, it is weak.

Covering too many disconnected topics

This weakens entity clarity and topical trust.

Writing vague opinion without proof

Readers do not trust it, and AI systems do not get much usable value from it either.

Leaving structure messy

Weak headings, no hierarchy, no direct answers, no FAQ logic, and no internal flow all make a page harder to read and harder to extract from.

Chasing trends blindly

Freshness matters. Noise does not.

Treating AEO or GEO like shortcuts

There is no real shortcut around useful, accessible, high-quality content.

What smart marketers should do next

If I were improving a website for AI search today, I would do this in order:

1. Clarify the site entity

2. Clean up the top 10 to 20 most important pages

3. Improve structure and extractability

4. Remove contradiction and fluff

5. Add proof where useful

6. Strengthen internal linking between related content

7. Track broader visibility signals, not just rankings

That is the smarter move.

Because the future is not just search rankings.

It is search trust.

Mayank’s Take

Most people are still trying to win AI search with surface-level tactics.

That is the wrong game.

If your website is vague, bloated, inconsistent, or full of recycled content, AI systems will treat it the same way smart users do: as a weak source.

You do not become the source of truth by publishing more noise.

You become the source of truth when your website becomes:

- clearer

- tighter

- more consistent

- more useful

- easier to trust

- easier to extract from

That is the shift.

Not “write for AI.”

Build a site that deserves to be used.

FAQs

What does “source of truth” mean in AI search?

It means your website is clear, consistent, and trustworthy enough that AI systems can use it as a reliable source for answers or citations.

Is AI search optimization different from traditional SEO?

Yes. It still overlaps with SEO, but it puts more pressure on clarity, structure, trust, accessibility, and answer usefulness.

Can a small website become a strong source in AI search?

Yes. A focused website with better clarity and stronger proof can become more useful than a larger site full of generic content.

Do I need schema on every page for AI search?

No. Schema can help in some cases, but it does not replace strong content, clear structure, and accessible information.

How do I measure AI search visibility?

Use directional signals like branded search lift, manual AI query testing, crawl clues, referral behavior, and topic ownership.

Should I rewrite my whole website for AI search?

No. Start with your most important pages and strongest topic clusters first.

External useful sources

- Google’s guidance for AI search content: https://developers.google.com/search/blog/2025/05/succeeding-in-ai-search

- Google’s AI features documentation: https://developers.google.com/search/docs/appearance/ai-features

- Source of truth in AI search: https://searchengineland.com/why-your-website-is-now-the-source-of-truth-in-local-ai-search-474389

- AI crawler visibility: https://searchengineland.com/log-file-analysis-ai-crawlers-search-visibility-474428